UTA researchers see opportunity to innovate as AI devours energy resources

Like it or fear it, generative AI has made routine, mundane tasks less laborious. But the downside to that efficiency is that it takes a lot of electricity and a lot of water to run and cool the data centers where AI computing occurs, straining the power grid and devouring precious resources.

As AI workflows increasingly permeate our everyday lives, this problem is getting more and more attention, and graduate students in UT Arlington’s engineering school are among the nation’s leaders in finding critical energy-saving solutions.

AI computing is far more power hungry

To illustrate the size of the challenge at hand, in a report published by MIT, Noman Bashir, whose research focuses on technology’s environmental impact, said AI “might consume seven or eight times more energy than a typical computing workload.”

The same report estimated that by 2026, worldwide data center energy consumption will surpass that of every nation except China, the U.S., India and Russia.

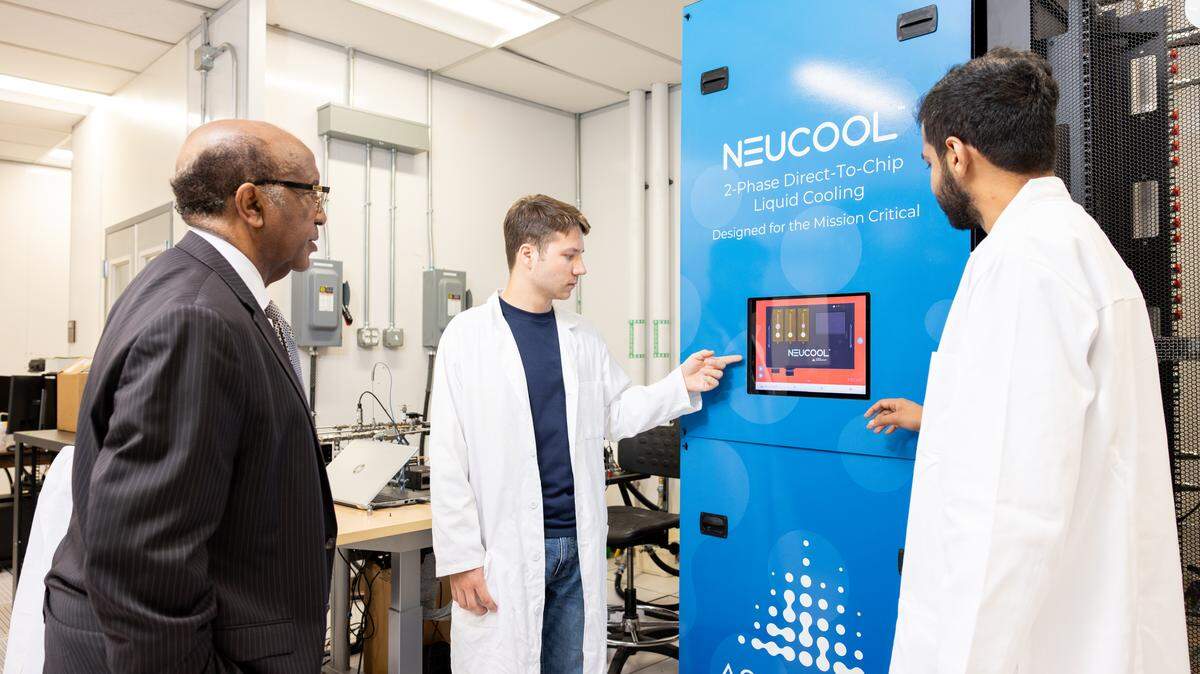

In UTA’s Nedderman Hall, which houses the school’s engineering labs, Dereje Agonafer and his Ph.D. students are working with other institutions, including the University of Maryland, to develop more efficient ways of cooling the high-powered graphics processing unit (GPU) chips that make AI computing possible and generate a tremendous amount of heat.

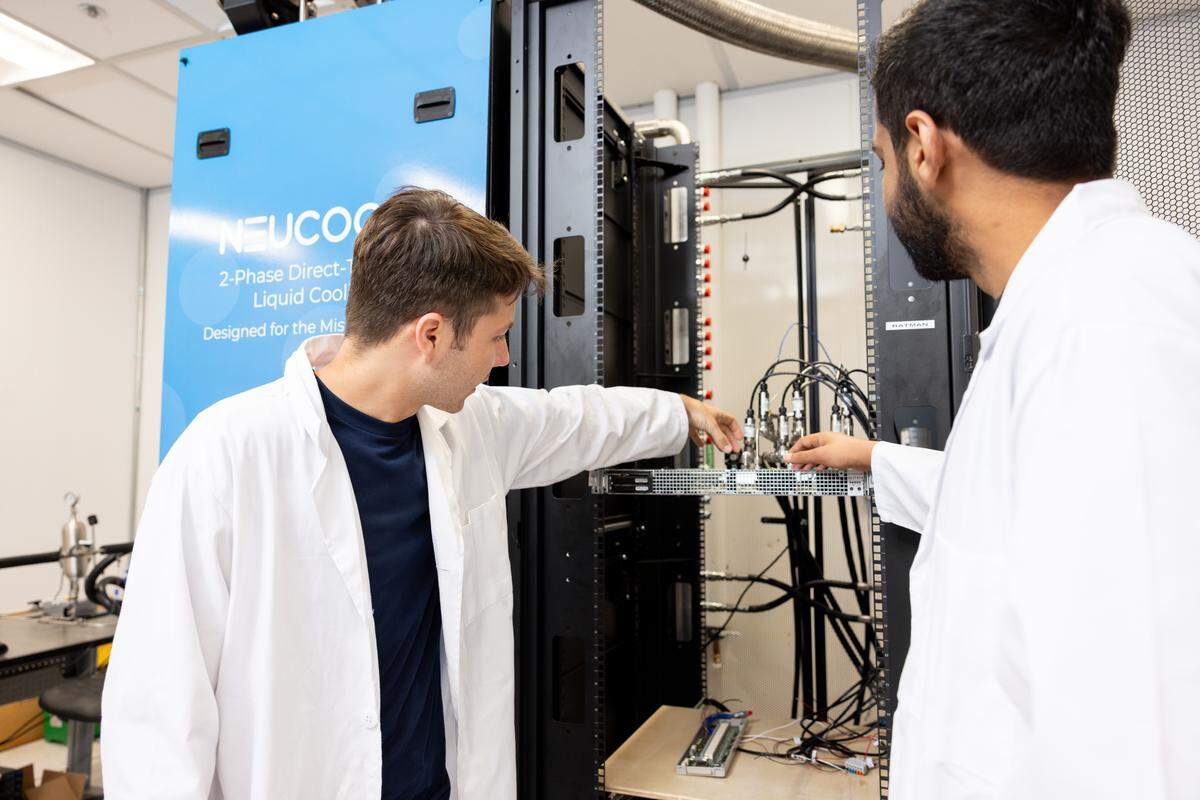

One of the innovations to come out of this research is a cooling plate that fits over a chip. A cooling distribution unit pumps dielectric fluid to the plate to cool the chip. As it absorbs heat, the fluid turns to vapor and returns, via an exhaust tube, to the cooling distribution unit where it is condensed back into a liquid form. That closed-loop process repeats itself, ensuring the chip stays below the maximum 85 degrees Celsius (185 degrees Fahrenheit) threshold.
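The temperature limit quoted above can be checked with a few lines of Python. This is only an illustrative sketch: the 85-degree figure comes from the article, while the function names and the sample reading are hypothetical and do not describe any UTA or Accelsius software.

```python
# Maximum chip temperature cited in the article, in degrees Celsius.
MAX_CHIP_TEMP_C = 85.0


def celsius_to_fahrenheit(temp_c: float) -> float:
    """Convert a temperature from degrees Celsius to degrees Fahrenheit."""
    return temp_c * 9.0 / 5.0 + 32.0


def within_limit(temp_c: float, limit_c: float = MAX_CHIP_TEMP_C) -> bool:
    """Return True if a chip temperature reading is below the limit."""
    return temp_c < limit_c


# The article's conversion of the threshold.
threshold_f = celsius_to_fahrenheit(MAX_CHIP_TEMP_C)

# Hypothetical sensor reading, chosen only for illustration.
sample_reading_c = 72.5
sample_ok = within_limit(sample_reading_c)
```

Here `threshold_f` reproduces the article's Fahrenheit figure, and `sample_ok` shows how a reading would be compared against the limit.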

Unlike other cooling methods, such as the evaporative towers that cool many data centers, the direct-to-chip method using a cooling plate consumes no water. Direct-to-chip cooling can also be used in data centers that aren’t equipped with air conditioning, reducing resource consumption even more dramatically.

Agonafer sees solutions like this as being critical to preserving our nation’s resources. That’s especially true in Texas, which ranks behind only Virginia in statewide data center energy usage, according to a study published by the Electric Power Research Institute.

If trends continue, data centers could account for as much as 10% of Texas’ energy consumption by 2030, double their current share.

As for water consumption, Agonafer said hyperscale data centers in the U.S., the ones powering generative AI, are incredibly thirsty.

“On the high end, we’re talking about 300 billion liters per year,” Agonafer said of the water usage outlook for 2028. “The low end is going to be 150 billion. Either way, it’s a lot of water. So any technology, like ours, which minimizes the use of water is going to be very important.”

AI advancement dictates more energy-efficient practices

Agonafer mentioned the $500 billion Stargate data center under development in Abilene — which will be one of the largest AI data centers in the world, reportedly with enough space to house up to 400,000 GPUs — as evidence of the direction AI computing is heading.

“It’s going to be a huge opportunity,” said Agonafer. “Challenges and opportunities go hand in hand. And coming up with viable ways of cooling that minimize water as well as electricity is certainly important.”

The latest and greatest GPU chip from Nvidia, added Agonafer, will have the capacity to consume 1.4 kilowatts of power. Chips this powerful simply can’t be air cooled, which is forcing technology companies to take a longer look at liquid cooling solutions that could ultimately be better for the environment.

These days, universities provide much of the innovation so valued by industry, and UTA has cultivated a symbiotic relationship with tech companies like Austin-based Accelsius, maker of liquid cooling distribution units for high-powered computing.

Rich Bonner, chief technology officer at Accelsius, was introduced to Agonafer and his research team through a program director with the U.S. Department of Energy’s Advanced Research Projects Agency-Energy, better known as ARPA-E.

Agonafer’s chip cooling program had received ARPA-E funding, and Bonner said he was happy to share ideas and Accelsius hardware with the UTA students.

“Their teams had done a lot of very interesting work at the cold plate level,” said Bonner. “Very academic, very new, but very exciting.”

As we approach the new frontier of AI computing, Bonner said efficient power usage is a key focus, and that’s why Accelsius’ cooling distribution unit solutions combined with UTA’s cold plate technology have gained industry attention along with government money.

“What you’re hearing a lot about is your amount of computation per watt of electricity,” Bonner explained. “You don’t want to bring in 1 watt of electricity and 70% of it go into your chip and 30% go into the air conditioner, the power supplies and everything else required to keep that chip cool and fed with power. So by going to this closed-loop refrigerant, two-phase cooling approach (using liquid and gas refrigerant), we give that power back to the chip by allowing the chip to run more efficiently.”
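Bonner’s 70/30 split can be turned into a rough overhead figure. The sketch below assumes a simple model in which every watt drawn is either delivered to the chip or spent on cooling and power delivery. The ratio it computes is similar in spirit to the industry’s power usage effectiveness (PUE) metric, though it is not a formal PUE calculation. The function name and the 90% comparison case are hypothetical, chosen only for illustration.

```python
def overhead_ratio(chip_fraction: float) -> float:
    """Watts drawn in total for each watt that actually reaches the chip."""
    if not 0 < chip_fraction <= 1:
        raise ValueError("chip_fraction must be in the range (0, 1]")
    return 1.0 / chip_fraction


# Bonner's illustrative split: 70% to the chip, 30% to cooling and power delivery.
baseline = overhead_ratio(0.70)

# Hypothetical improved split (an assumption, not a figure from the article).
improved = overhead_ratio(0.90)

print(f"baseline: {baseline:.2f} W drawn per W delivered to the chip")
print(f"improved: {improved:.2f} W drawn per W delivered to the chip")
```

Under this simplified model, the closer the ratio gets to 1, the more of each watt goes to computation rather than to keeping the chip cool and powered.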

The U.S. government wants to see this efficiency in action to ensure energy security and stability, which is why it funds programs like the one at UTA. But Agonafer said private sector companies are equally invested. For example, he pointed to data center operators that are looking at clean energy solutions like micro nuclear reactors to power their facilities in coming years, which would reduce or eliminate their reliance on the energy grid.

“These big data centers, they’re part of the community,” Agonafer said. “They’re interested in seeing the community grow. I am totally convinced that these companies, whether it’s Nvidia or Microsoft or any of them, are very interested in making sure sustainability is a factor in what they do.”